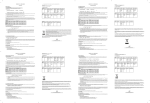

Automatic analysis of eye tracker data

Transcript