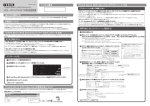

Distributed Application Control System (DACS)

Transcript