SPECpower_ssj2008 User Guide

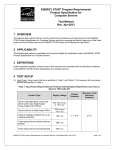
